Found a GitHub repo that tackles conventional compute limits from the opposite direction to everyone else, and it’s actually genius. Cortical Labs recently commercialized the CL1, a $35,000 biological computer running 200,000 living human neurons in a nutrient solution. They famously taught it to play Pong and DOOM. But an independent dev just took their API and wired the neurons straight into a local LLM. The system uses the biological firing of the cells to steer the AI’s token generation. It’s the ultimate middle finger to billion‑dollar GPU clusters.

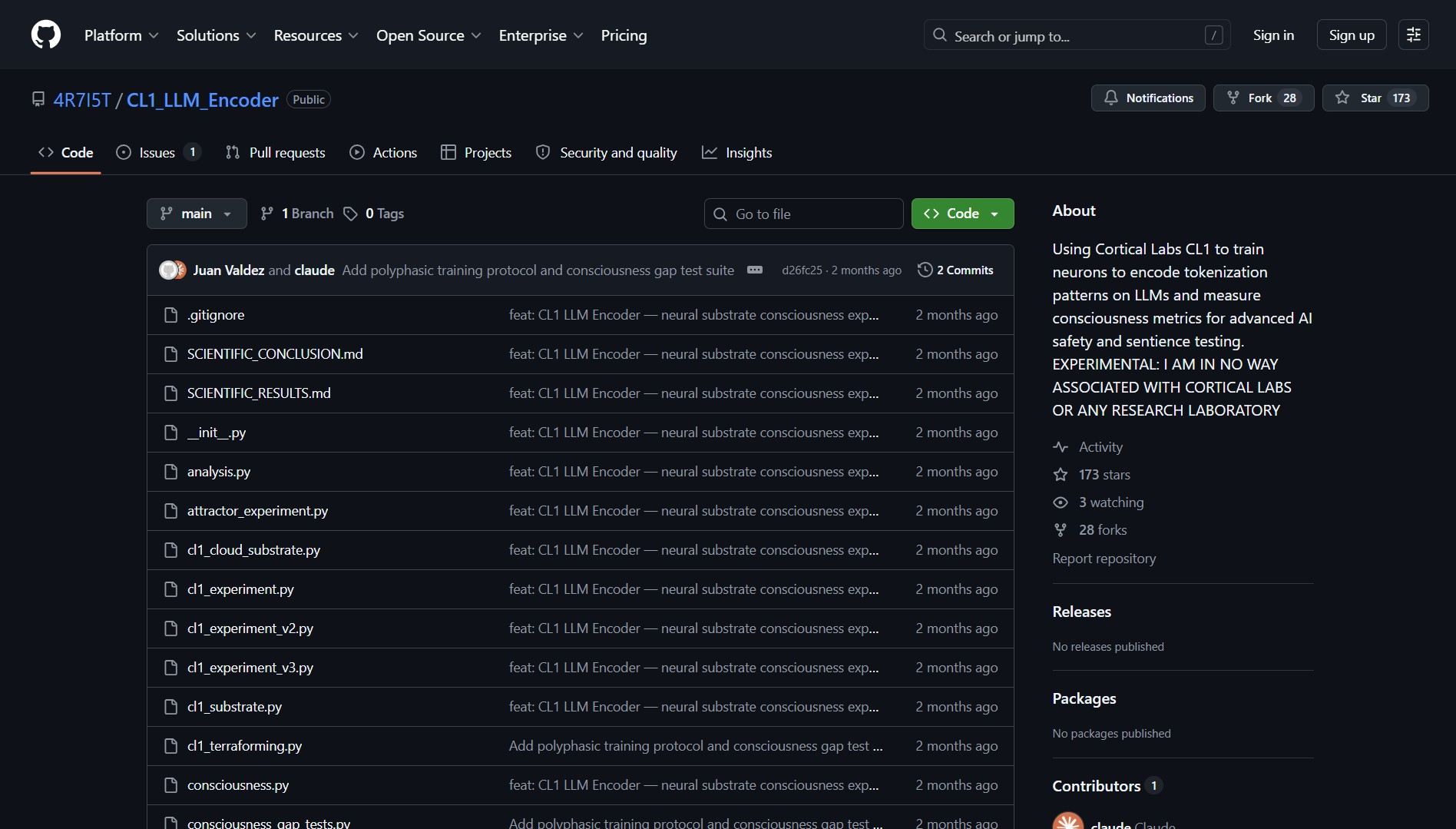

CL1_LLM_Encoder GitHub repository homepage

CL1_LLM_Encoder is aimed at experimental AI researchers and hackers. It approaches the problem of conventional compute limits by exploring how biological neurons can influence LLM token generation. The project bridges wetware (biological computing) and software (large language models), creating a hybrid system where living cells directly influence AI output.

What Is CL1 LLM Encoder?

CL1 LLM Encoder is an experimental interface that connects Cortical Labs’ CL1 biological computer, which contains 200,000 living human neurons in a nutrient‑rich medium, to a local large language model. Rather than leaving token selection entirely to silicon, the system uses the spontaneous firing patterns of biological neurons to influence or dictate which tokens the LLM produces. The LLM itself still runs on conventional hardware; the neurons shape its output. The result is a fundamentally different approach to AI computation that blends organic and digital intelligence.

[!WARNING] This is experimental research, not production‑ready technology. The CL1 biological computer costs $35,000 and requires specialized biological maintenance. The interface demonstrates a concept rather than providing a practical alternative to GPU‑based inference.

How It Works: Biological‑Digital Hybrid Architecture

| Component | Role | Implementation |
|---|---|---|
| Cortical Labs CL1 | Biological computing substrate | 200,000 living human neurons in nutrient solution |
| Neural Activity API | Interface to neuron firing patterns | REST/WebSocket API provided by Cortical Labs |
| Token Encoding Layer | Translates neural spikes to token probabilities | Custom algorithm mapping firing rates to vocabulary |
| Local LLM | Language model influenced by biological input | Hugging Face transformers or similar local model |
| Feedback Loop | Optional system to adjust based on output | Can modify stimulation or interpretation based on results |
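
The hand-off from the Neural Activity API to the Token Encoding Layer in the table above can be pictured as a simple binning step. What follows is a minimal sketch, not the repository's code: the `(channel, timestamp)` event format, the `firing_rates` function, and its parameters are all assumptions made for illustration, and no real Cortical Labs API call is shown.

```python
from collections import Counter

def firing_rates(spikes, n_channels, window_s):
    """Convert spike events into per-channel firing rates in Hz.

    `spikes` is an iterable of (channel_index, time_in_seconds) pairs that
    are assumed to fall inside one observation window of length `window_s`.
    This event format is hypothetical, not the CL1 API's actual format.
    """
    if window_s <= 0:
        raise ValueError("window_s must be positive")
    # Count spikes per channel, ignoring events on unknown channels.
    counts = Counter(ch for ch, _t in spikes if 0 <= ch < n_channels)
    return [counts.get(ch, 0) / window_s for ch in range(n_channels)]

# Three spikes on channel 0 and one on channel 2 within a 0.5 s window.
events = [(0, 0.01), (0, 0.12), (2, 0.20), (0, 0.31)]
rates = firing_rates(events, n_channels=4, window_s=0.5)
print(rates)  # [6.0, 0.0, 2.0, 0.0]
```

A rate vector like this is one plausible input for the encoding layer; a real system might just as well use spike timing or burst patterns.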

Technical Implementation Details

- API Integration: Uses Cortical Labs’ official API to access real‑time neural activity data.

- Token Probability Mapping: Converts neuron firing rates and patterns into probability distributions over the LLM’s vocabulary.

- Real‑Time Influence: Neural activity continuously adjusts token selection during generation, not just as an initial seed.

- Local Processing: Entire pipeline runs locally, avoiding cloud dependencies and maintaining experimental control.

- Open Source Framework: Full code available for modification and extension by other researchers.
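
To make the token probability mapping concrete, here is a hedged sketch of one way firing rates could bias an LLM's next-token distribution. The modulo mapping from channels to token ids, the `scale` parameter, and the names `spike_bias` and `sample_token` are placeholders invented for this example; the article does not describe the mapping the repository actually uses.

```python
import math
import random

def spike_bias(rates, vocab_size, scale=1.0):
    """Spread per-channel firing rates over the vocabulary as a logit bias.

    Channel c is assigned to every token id with id % n_channels == c.
    This modulo mapping is a hypothetical stand-in for the real encoder.
    """
    if not rates:
        raise ValueError("rates must not be empty")
    n = len(rates)
    mean = sum(rates) / n
    # Centre the rates so quiet channels push tokens down, active ones up.
    return [scale * (rates[tok % n] - mean) for tok in range(vocab_size)]

def softmax(logits):
    """Numerically stable softmax over a list of floats."""
    m = max(logits)
    exps = [math.exp(x - m) for x in logits]
    total = sum(exps)
    return [e / total for e in exps]

def sample_token(llm_logits, rates, scale=1.0, rng=random):
    """Add the spike-derived bias to the model's logits, then sample once."""
    bias = spike_bias(rates, len(llm_logits), scale)
    probs = softmax([l + b for l, b in zip(llm_logits, bias)])
    token = rng.choices(range(len(probs)), weights=probs, k=1)[0]
    return token, probs

# Stand-in values: logits for one decoding step and rates from two channels.
llm_logits = [2.0, 1.0, 0.5, 0.1]
rates = [6.0, 0.0]  # Hz
token, probs = sample_token(llm_logits, rates, scale=0.5, rng=random.Random(0))
```

For real-time influence, a generation loop would re-read the firing rates before every decoding step and call `sample_token` again, so the neurons shape each token rather than only the first. Setting `scale=0` recovers the model's unmodified distribution, which gives a handy baseline for comparison.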

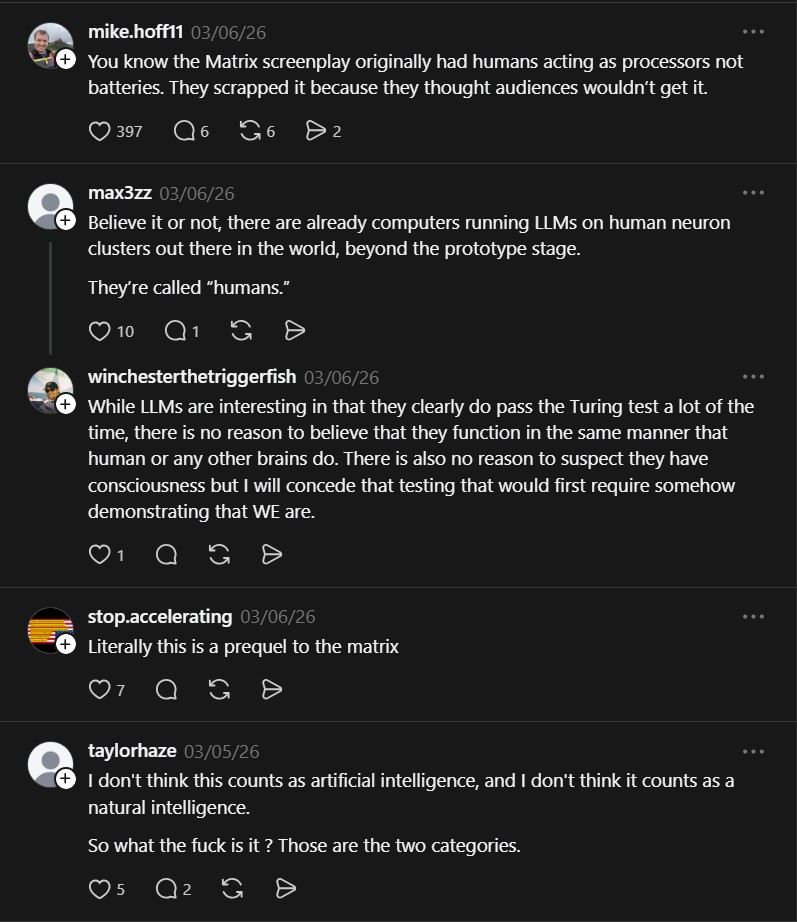

Community discussion about the biological vs computational aspects

Community Reactions & Philosophical Implications

“While using an API connected to living cells is interesting it is in no way the same as having the living cells running an LLM” (@joshwoodthehuman)

“Try using a hex lattice based algorithm with the LLM. After this start asking it deep and philosophical questions.” (@erick.m.95)

These comments capture the spectrum of reactions, from skepticism about the true biological nature of the computation to excitement about philosophical experiments. The first highlights an important distinction: the neurons aren’t “running” the LLM in a traditional sense but influencing its output. The second suggests intriguing experimental directions, such as using the hybrid system to explore consciousness‑related questions.

Additional community discussion about experimental approaches

Why This Changes Experimental AI Research

CL1 LLM Encoder represents more than just a technical experiment; it’s a philosophical statement about the nature of computation and intelligence. By creating a bridge between biological and artificial intelligence, it opens several new research directions:

- Alternative Compute Paradigms: Explores whether biological systems can complement or enhance silicon‑based AI.

- Consciousness Studies: Provides a tool for investigating whether hybrid systems exhibit novel properties.

- AI Ethics Frontier: Raises questions about the moral status of AI‑neuron hybrids and their treatment.

- Reduced Energy Computing: Biological neurons consume far less power than GPUs, hinting at future efficiency possibilities.

- Decentralized AI: Points toward a future where AI computation might be distributed across diverse substrates, not just data centers.

Experimental Stack

If biological tokens aren’t your thing but you still want insane local inference, Flash-moe streams 397B models from your SSD. To brush up on the actual math and physics behind these systems, Math Science Video Lectures has the Ivy League curriculum open-sourced.

Project Link

This project is a reminder that sometimes the most valuable research isn’t about incremental improvements but about radically reimagining fundamental assumptions. By asking “what if AI tokens came from living neurons instead of silicon?” it challenges our entire conception of what computation is and where intelligence might emerge.