I opened this GitHub repo expecting the usual boilerplate and walked away thinking about how elegant the solution actually is. Google just dropped another banger: they open‑sourced the Agent Development Kit, and it pairs well with Gemini 3.1 Flash‑Lite. That means you can now build always‑on AI agents that run 24/7 at negligible cost. 100% open source.

Google’s always‑on memory agent GitHub repository

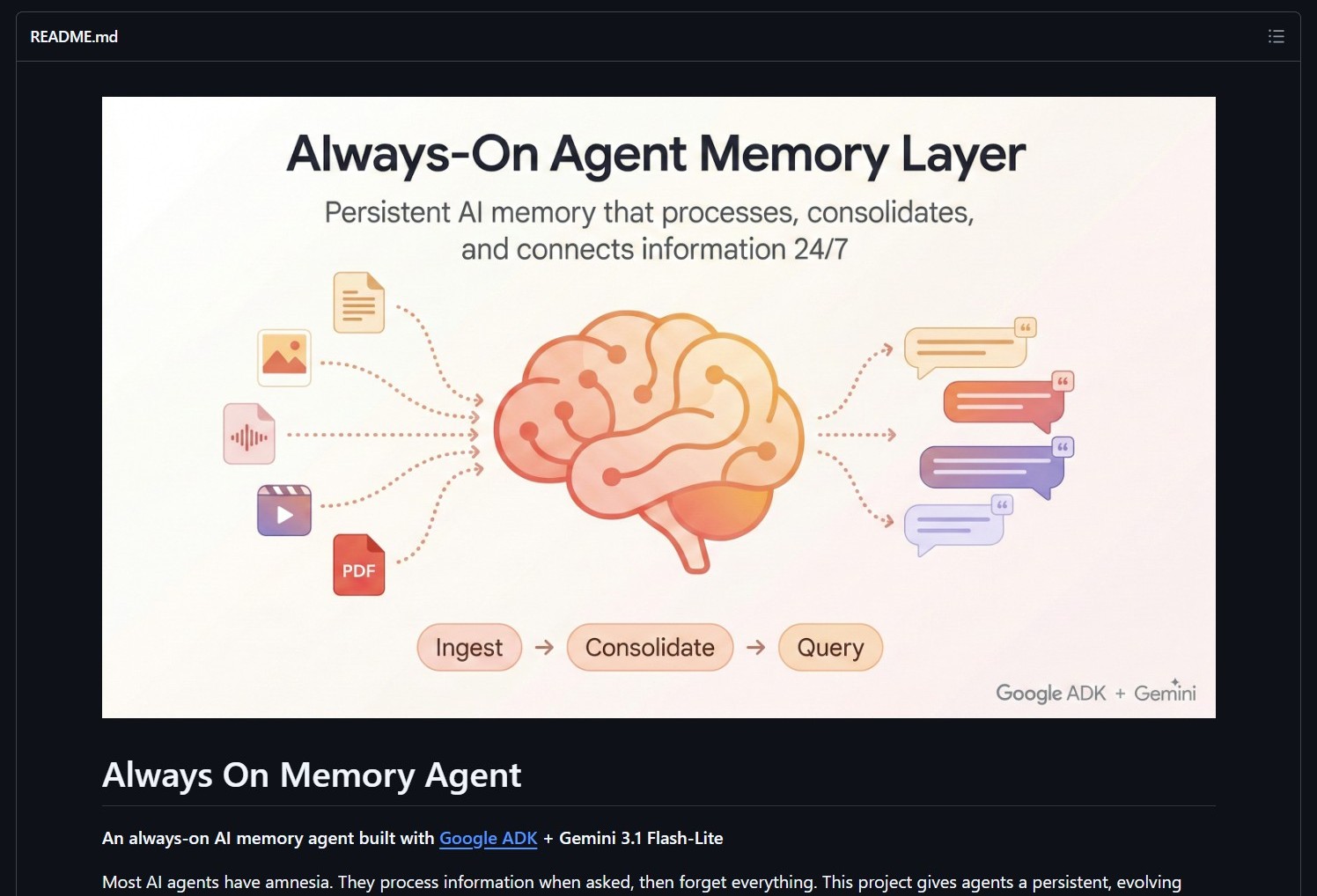

Most AI agents have amnesia: they process information when asked, then forget everything. Google’s always‑on memory agent is aimed at developers building persistent AI systems who want to fix that statelessness without complex infrastructure. It gives agents a persistent, evolving memory that runs 24/7 as a lightweight background process, continuously processing, consolidating, and connecting information.

What Is Google’s Always‑On Memory Agent?

The always‑on memory agent is an open‑source implementation built with Google’s Agent Development Kit (ADK) and Gemini 3.1 Flash‑Lite. Unlike traditional agents that process requests in isolation and forget everything afterward, this agent maintains a persistent memory that grows and evolves over time, running continuously as a background process with minimal resource consumption.

[!NOTE] The most revolutionary aspect: “No vector database. No embeddings. Just an LLM that reads, thinks, and writes structured memory.” This simplicity is what makes the negligible cost possible.
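To make "reads, thinks, and writes structured memory" concrete, here is a minimal sketch of an ingest step. It is not the repository's code: the prompt wording, the `memories` table layout, and the `open_store`, `ingest`, and `fake_llm` names are all assumptions made for illustration, and `fake_llm` stands in for a real model call so the example runs offline.

```python
import json
import sqlite3
from typing import Callable

# Hypothetical prompt; the project's actual wording is not shown in this post.
INGEST_PROMPT = (
    "Read the text below and reply with JSON containing "
    '"summary" (string), "entities" (list) and "topics" (list).\n\n{text}'
)


def open_store(path: str = ":memory:") -> sqlite3.Connection:
    """Create a single plain table: no vector index, no embedding column."""
    conn = sqlite3.connect(path)
    conn.execute(
        "CREATE TABLE IF NOT EXISTS memories ("
        "id INTEGER PRIMARY KEY, summary TEXT, entities TEXT, topics TEXT)"
    )
    return conn


def ingest(conn: sqlite3.Connection, text: str, llm: Callable[[str], str]) -> int:
    """Ask the model for a structured record and persist it as an ordinary row."""
    record = json.loads(llm(INGEST_PROMPT.format(text=text)))
    cur = conn.execute(
        "INSERT INTO memories (summary, entities, topics) VALUES (?, ?, ?)",
        (
            record["summary"],
            json.dumps(record.get("entities", [])),
            json.dumps(record.get("topics", [])),
        ),
    )
    conn.commit()
    return cur.lastrowid


def fake_llm(prompt: str) -> str:
    """Stand-in for a real model call (assumption: the model returns valid JSON)."""
    return json.dumps(
        {
            "summary": "Team moved standup to 10am",
            "entities": ["team"],
            "topics": ["schedule"],
        }
    )


if __name__ == "__main__":
    conn = open_store()
    row_id = ingest(conn, "Heads up: standup is now at 10am.", fake_llm)
    print(conn.execute("SELECT * FROM memories WHERE id = ?", (row_id,)).fetchone())
```

The point of the sketch is the shape of the data: the model's output is stored as readable text fields, so anything that can read a table can inspect or edit the agent's memory.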

How It Works: No Vector Databases, Just LLM Memory

| Traditional Approach | What It Involves |
|---|---|
| Vector Databases | Stores embeddings in specialized databases (Pinecone, Weaviate, etc.) |
| Embedding Models | Requires separate embedding models to convert text to vectors |
| Stateless Processing | Each interaction starts fresh, no memory between sessions |
| High Infrastructure Cost | Database hosting, embedding API costs, complex architecture |
| Complex Retrieval | Vector similarity search with potential relevance issues |

| Google’s Always‑On Approach | What It Involves |
|---|---|
| Direct LLM Memory | The LLM itself maintains structured memory without external databases |
| Continuous Processing | Background thread constantly reads, thinks, and updates memory |
| Persistent State | Memory evolves over time, retaining context indefinitely |
| Negligible Cost | Gemini 3.1 Flash‑Lite is optimized for continuous, low‑cost operation |
| Simple Architecture | No external dependencies beyond the LLM itself |
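The retrieval side of that comparison can be sketched in a few lines. Instead of embedding the question and running a similarity search, the stored memories are placed directly in the prompt and the model picks what is relevant. The `Memory` dataclass, `build_query_prompt`, and `answer` are hypothetical names for illustration, not the project's API, and `llm` is any callable that maps a prompt string to a reply.

```python
from dataclasses import dataclass, field
from typing import Callable, List


@dataclass
class Memory:
    """One structured memory record (hypothetical shape)."""
    id: int
    summary: str
    topics: List[str] = field(default_factory=list)


def build_query_prompt(memories: List[Memory], question: str) -> str:
    """Put every stored memory in the prompt instead of running a vector search."""
    lines = [
        f"[{m.id}] {m.summary} (topics: {', '.join(m.topics)})"
        for m in memories
    ]
    return (
        "Answer using only these memories and cite their ids.\n"
        + "\n".join(lines)
        + f"\n\nQuestion: {question}"
    )


def answer(memories: List[Memory], question: str, llm: Callable[[str], str]) -> str:
    """Let the model do the relevance ranking that a vector index would otherwise do."""
    return llm(build_query_prompt(memories, question))


if __name__ == "__main__":
    store = [
        Memory(1, "Standup moved to 10am", ["schedule"]),
        Memory(2, "Alice owns billing", ["ownership"]),
    ]
    print(build_query_prompt(store, "Who owns billing?"))
```

The trade-off is worth stating plainly: this only works while the memories you pass in fit in the model's context window, which is one reason periodic consolidation into shorter summaries matters for this style of design.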

Technical Implementation

- Agent Development Kit (ADK): Google’s framework for building production‑ready AI agents.

- Gemini 3.1 Flash‑Lite: Optimized for cost‑effective, continuous operation with strong reasoning capabilities.

- Structured Memory: The LLM organizes information in a coherent, queryable format without vector embeddings.

- Background Processing: Runs as a lightweight service, constantly updating memory without blocking user interactions.

- Open Source: Full implementation available on GitHub under Apache 2.0 license.
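The background-processing piece from the list above can be approximated with nothing but the standard library. This is a sketch under assumptions, not the repository's implementation: `MemoryWorker` is a hypothetical class, the `ingest` and `consolidate` callables are supplied by you (in a real setup they would call the model), and the 30-minute default interval is an arbitrary placeholder.

```python
import queue
import threading
import time
from typing import Callable


class MemoryWorker:
    """Daemon thread that ingests queued text and periodically consolidates."""

    def __init__(
        self,
        ingest: Callable[[str], None],
        consolidate: Callable[[], None],
        consolidate_every: float = 1800.0,  # seconds; arbitrary placeholder value
    ) -> None:
        self.inbox: "queue.Queue[str]" = queue.Queue()
        self._ingest = ingest
        self._consolidate = consolidate
        self._every = consolidate_every
        self._stop = threading.Event()
        self._thread = threading.Thread(target=self._run, daemon=True)

    def start(self) -> None:
        self._thread.start()

    def stop(self) -> None:
        """Signal the loop to exit and wait for the thread to finish."""
        self._stop.set()
        self._thread.join()

    def _run(self) -> None:
        next_pass = time.monotonic() + self._every
        while not self._stop.is_set():
            try:
                # Short timeout so the loop can notice stop requests and timers.
                text = self.inbox.get(timeout=0.1)
            except queue.Empty:
                text = None
            if text is not None:
                self._ingest(text)
                self.inbox.task_done()
            if time.monotonic() >= next_pass:
                self._consolidate()
                next_pass = time.monotonic() + self._every


if __name__ == "__main__":
    worker = MemoryWorker(
        ingest=lambda t: print("ingested:", t),
        consolidate=lambda: print("consolidating"),
        consolidate_every=0.2,
    )
    worker.start()
    worker.inbox.put("Standup moved to 10am")
    worker.inbox.join()   # block until the queued item has been ingested
    time.sleep(0.5)       # leave time for at least one consolidation pass
    worker.stop()
```

User-facing code only ever calls `worker.inbox.put(...)`, which returns immediately, so ingestion and consolidation never block an interaction.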

Community discussion about the economic implications

Community Impact & Economic Breakthrough

“the negligible cost part is what really unlocks things, we’ve been running some 24/7 monitoring agents and the economics finally make sense. curious what kind of agents people will build first with this” (@akhomyakov)

This comment hits the core innovation: previous attempts at persistent agents were technically possible but economically impractical. With Gemini 3.1 Flash‑Lite’s cost structure and this architecture, 24/7 agents become financially viable for the first time.
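If you want to check the economics for your own workload, the arithmetic is simple enough to script. The function below is a back-of-the-envelope sketch. Every number in the usage example is a made-up placeholder, not Gemini pricing or a measured workload, so substitute the current published rates and your own token counts.

```python
def daily_cost_usd(
    calls_per_day: int,
    input_tokens_per_call: int,
    output_tokens_per_call: int,
    usd_per_million_input: float,
    usd_per_million_output: float,
) -> float:
    """Estimate one day's model spend for an always-on agent."""
    input_cost = calls_per_day * input_tokens_per_call * usd_per_million_input / 1_000_000
    output_cost = calls_per_day * output_tokens_per_call * usd_per_million_output / 1_000_000
    return input_cost + output_cost


if __name__ == "__main__":
    # Placeholder inputs for illustration only; these are NOT real prices.
    estimate = daily_cost_usd(
        calls_per_day=1000,
        input_tokens_per_call=2000,
        output_tokens_per_call=200,
        usd_per_million_input=1.0,
        usd_per_million_output=2.0,
    )
    print(f"Estimated daily cost: ${estimate:.2f}")
```

Because cost scales linearly with calls per day and tokens per call, the consolidation interval and the size of the memory you send on each query are the two knobs that matter most.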

Why This Changes Agent Development

Google’s always‑on memory agent represents a paradigm shift in what’s economically and technically possible with AI agents:

- Economic Viability: Makes 24/7 agents affordable for startups, indie developers, and experimental projects.

- Architectural Simplicity: Eliminates the complexity of vector databases and embedding pipelines.

- True Persistence: Agents that genuinely learn and remember over time, not just within a single session.

- Background Intelligence: Continuous processing enables proactive agents that notice patterns and act without explicit prompts.

- Accessibility: Open‑source implementation lowers the barrier to building sophisticated agent systems.

[!TIP] Pairs well with: Claude-peers-mcp — sync context between multiple agent sessions in real-time.

Project Link

This project proves that sometimes the most elegant solution is also the simplest. By returning to first principles and asking “what do we actually need agents to remember, and what’s the simplest way to achieve that?”, Google has created an architecture that’s both more capable and dramatically cheaper than the complex vector‑database approaches that dominated the space.