Local AI & Performance Optimization

Run massive AI models on your own hardware. Deep dives into Metal shaders, Apple Silicon optimization, and memory streaming for edge-inference efficiency.

AI isn’t just for massive data centers. With the right optimization, you can now run models with roughly 400B parameters on a consumer laptop. We track the latest breakthroughs in local AI performance and hardware-aware inference.

Why Run AI Locally?

- Privacy: Your proprietary code and sensitive data never leave your local machine.

- No Usage Fees: No sky-high API bills, token-based pricing, or data transfer charges.

- Low Latency: Inference without network round trips, which is crucial for real-time coding assistants.

Technical Focus Areas

Apple Silicon & Metal Shaders

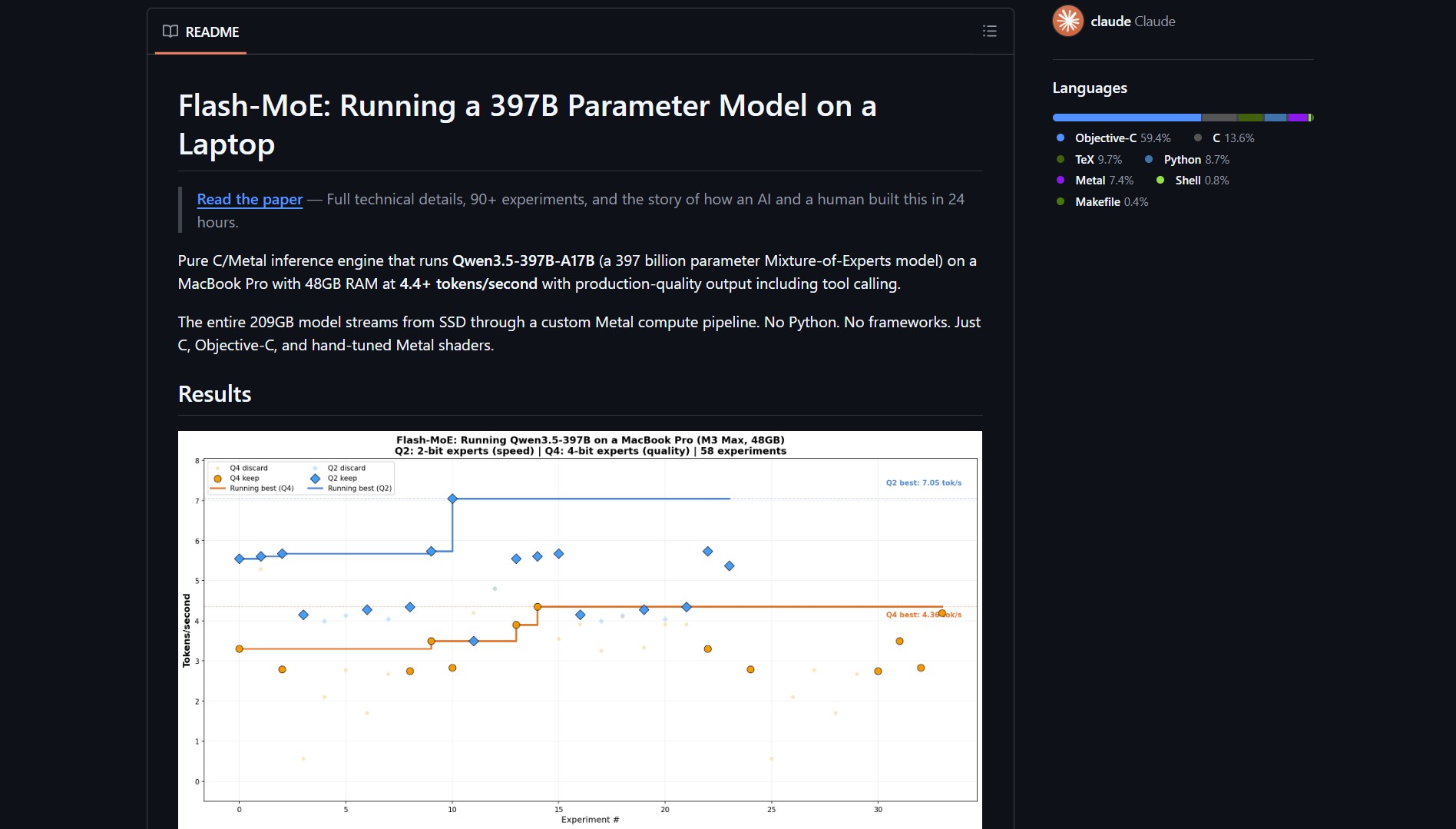

Unlocking the full potential of M1, M2, and M3 chips. Projects like Flash-moe prove that Apple’s Unified Memory architecture is a powerhouse for streaming Mixture-of-Experts (MoE) models directly from SSD to GPU.
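A quick back-of-envelope estimate shows why streaming from SSD matters for a model of this size. The sketch below computes the size of the weights alone for a 397B-parameter model at a few bit widths. The bit widths are illustrative assumptions, not figures taken from Flash-moe, and the estimate ignores activations and any cache memory.

```python
def weight_footprint_gb(params_billion: float, bits_per_weight: int) -> float:
    """Approximate size of the weights alone, in decimal gigabytes."""
    total_bytes = params_billion * 1e9 * bits_per_weight / 8
    return total_bytes / 1e9


# Illustrative bit widths (assumptions): 16-bit floats, 8-bit and 4-bit quantization.
for bits in (16, 8, 4):
    print(f"{bits}-bit: {weight_footprint_gb(397, bits):.1f} GB")
```

Even at 4 bits per weight the total comes to about 198.5 GB, which is why the weights have to be streamed from storage rather than held entirely in memory on a laptop.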

Intelligent Memory Hierarchy

Techniques for running models far larger than the physical RAM available. By streaming weights from a fast SSD and loading only what each layer needs at a given step, indie devs can now run very large models without server racks.
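The general idea can be sketched with a memory-mapped file: the weights stay on disk, and only the byte ranges for the experts selected for a given token are read. This is a toy illustration using the Python standard library, not how any particular project implements it. The file layout, `NUM_EXPERTS`, `FLOATS_PER_EXPERT`, and the chosen expert IDs are all hypothetical.

```python
import mmap
import os
import struct
import tempfile

NUM_EXPERTS = 8            # hypothetical expert count
FLOATS_PER_EXPERT = 1024   # hypothetical number of float32 weights per expert
EXPERT_BYTES = FLOATS_PER_EXPERT * 4


def write_demo_weights(path):
    """Write a toy weight file in which expert i is filled with float(i)."""
    with open(path, "wb") as f:
        for i in range(NUM_EXPERTS):
            values = [float(i)] * FLOATS_PER_EXPERT
            f.write(struct.pack(f"<{FLOATS_PER_EXPERT}f", *values))


def load_expert(mm, expert_id):
    """Read only one expert's byte range; the OS pages it in on demand."""
    start = expert_id * EXPERT_BYTES
    return struct.unpack_from(f"<{FLOATS_PER_EXPERT}f", mm, start)


def run_demo():
    fd, path = tempfile.mkstemp(suffix=".bin")
    os.close(fd)
    try:
        write_demo_weights(path)
        with open(path, "rb") as f:
            with mmap.mmap(f.fileno(), 0, access=mmap.ACCESS_READ) as mm:
                active = [2, 5]  # experts a router might pick for one token
                # Return the first weight of each active expert as a sanity check.
                return {e: load_expert(mm, e)[0] for e in active}
    finally:
        os.remove(path)


print(run_demo())
```

Only the pages backing experts 2 and 5 are touched here; the rest of the file never has to be resident in memory. A real system would add caching, prefetching, and a GPU-friendly layout on top of this.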

CLI-First AI Tools

Lightweight, terminal-native tools that integrate AI directly into your development workflow without the bloat of Electron-based wrappers.

[!NOTE] Running local AI demands specific hardware profiles (especially VRAM/Unified Memory), but modern memory-streaming techniques are rapidly lowering the barrier to entry.

Explore the latest in high-performance local AI:

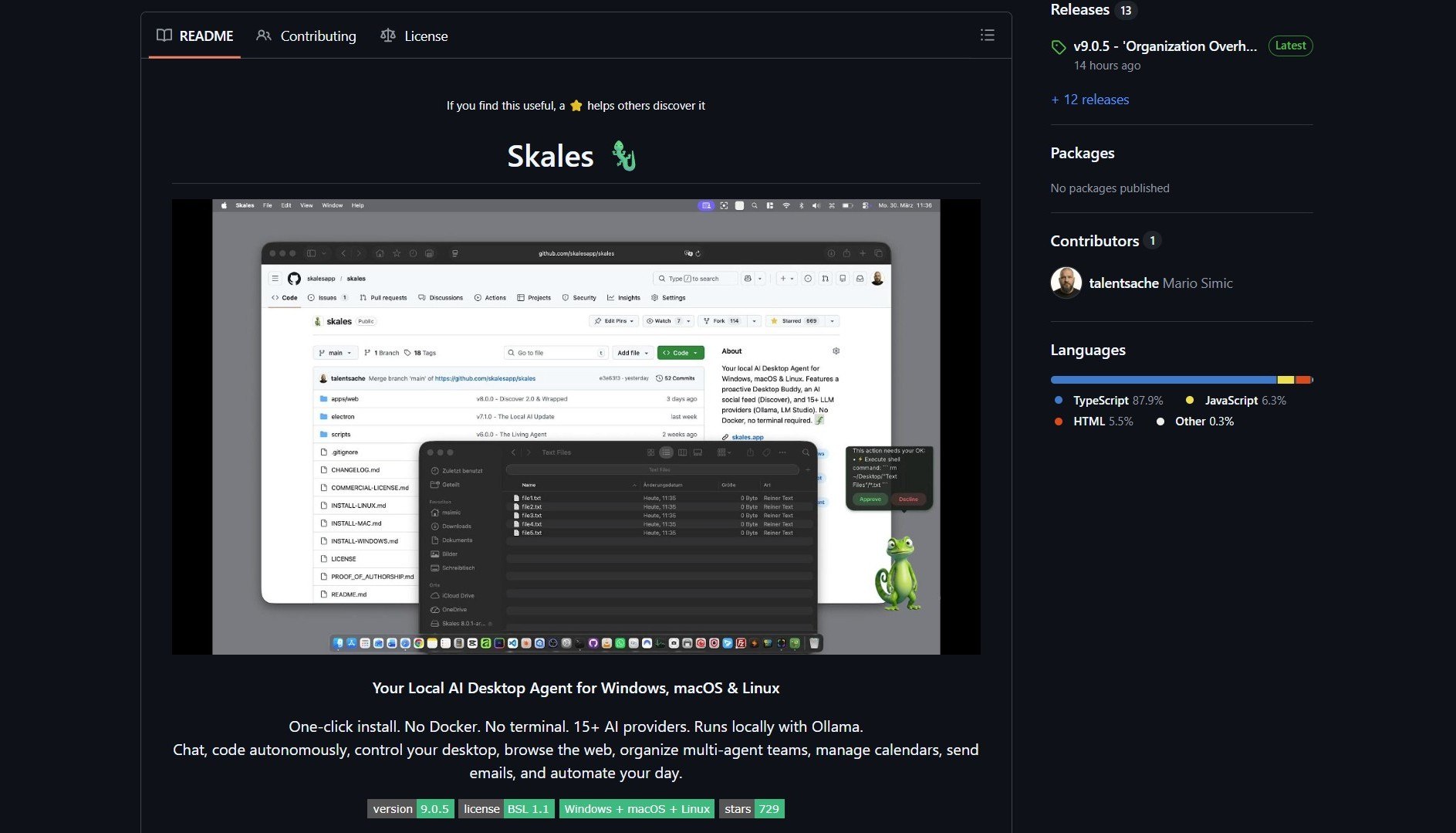

Skales: AI Desktop Assistant Without Docker or Complexity

Stumbled across a repo that does something so obvious I’m shocked it wasn’t everywhere already. A developer spent ...

AI Assistant · Desktop App

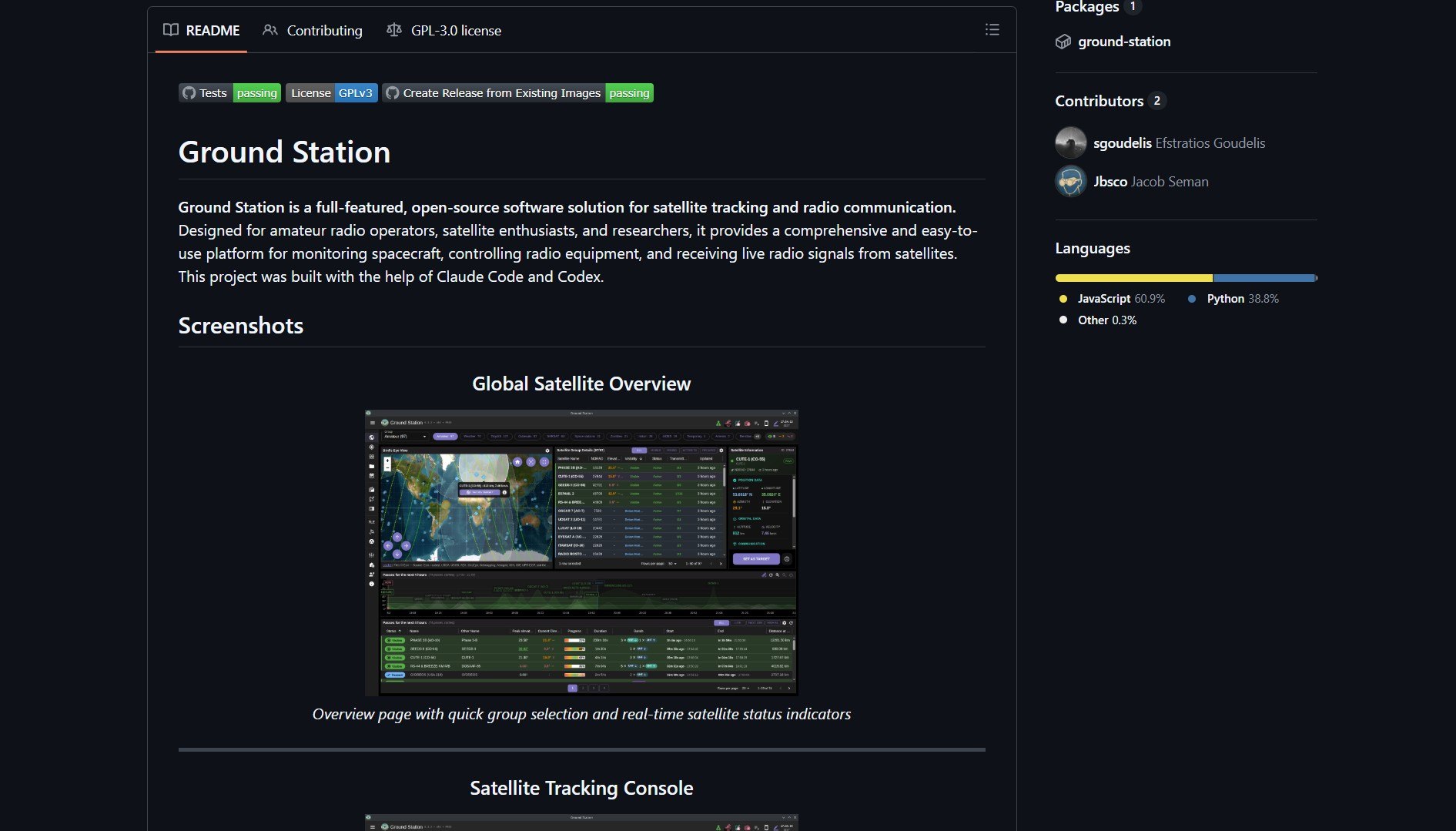

Ground Station: Open Source Satellite Tracking and Signal Decoding

Ground Station is a unified, open‑source suite for satellite tracking and signal decoding, allowing users to pull wea...

Satellite · Open Source

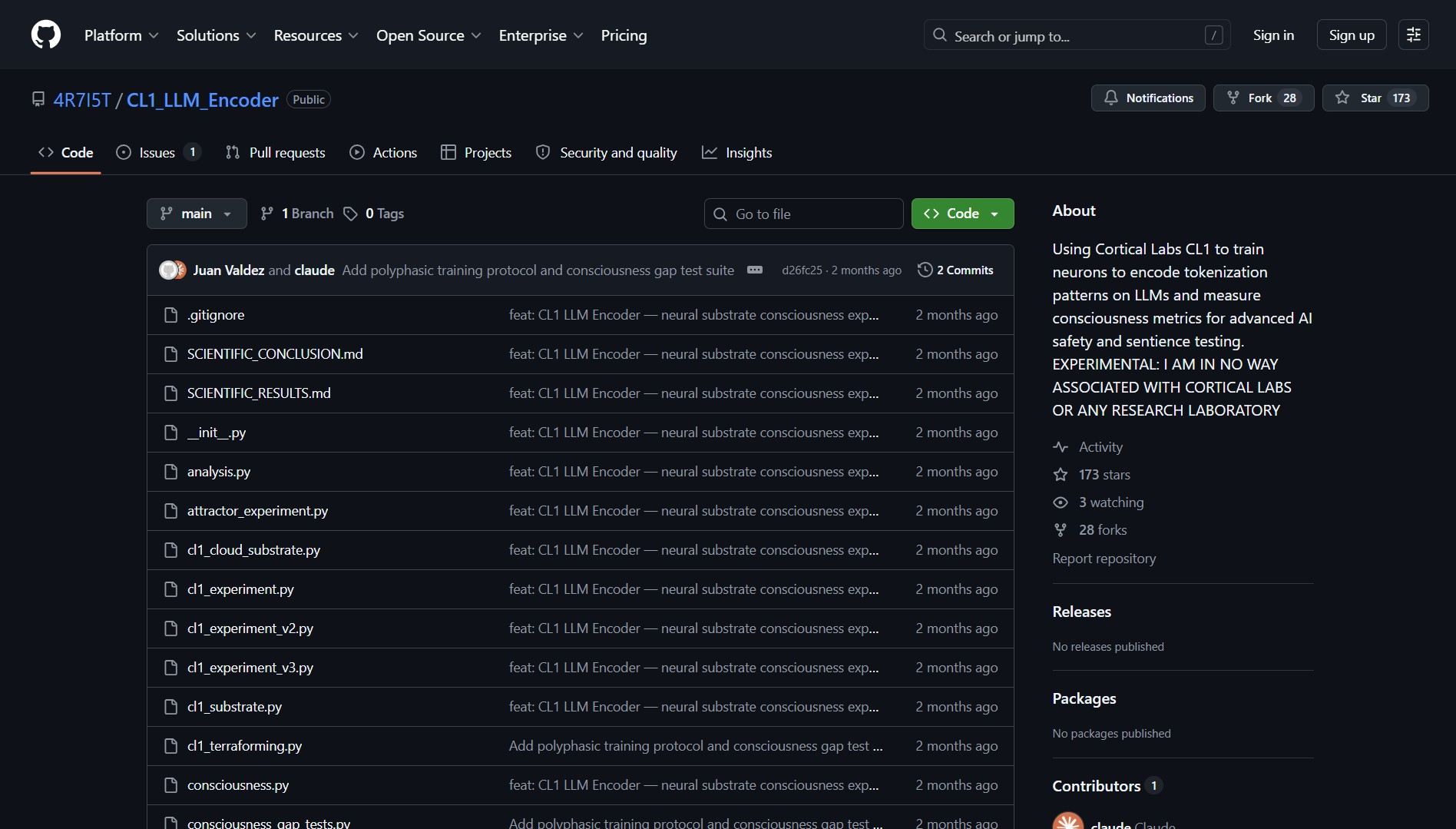

CL1 LLM Encoder: Biological Neurons Control LLM Token Generation

CL1 LLM Encoder is an experimental interface connecting biological neurons to LLMs, using living cells to influence a...

Biological Computing · AI

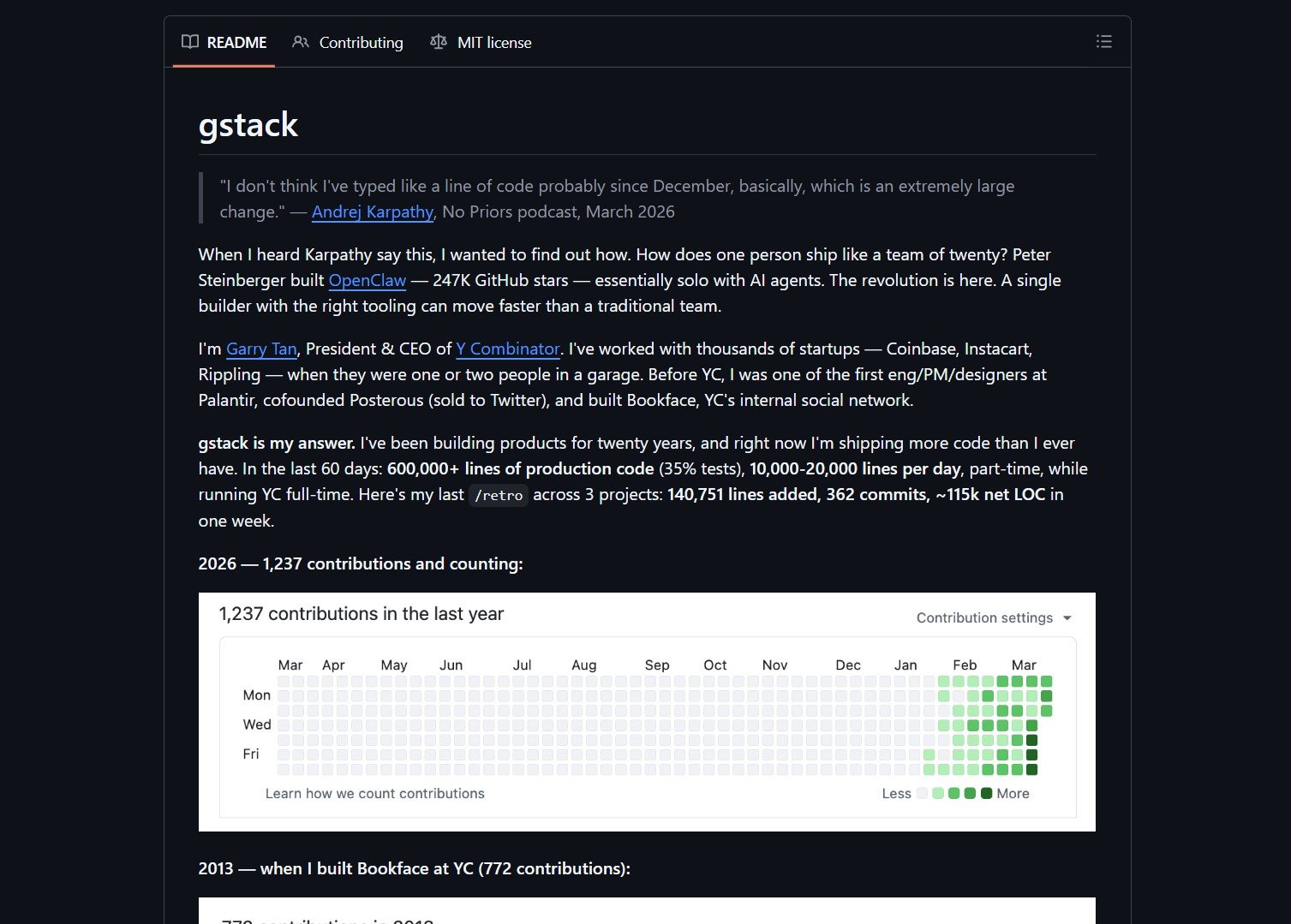

Gstack: YC CEO's AI Orchestration for Software Development

I fell into a GitHub repo yesterday that solves a problem I didn’t even know was draining my time, and now I can’t...

AI Orchestration · Claude Code

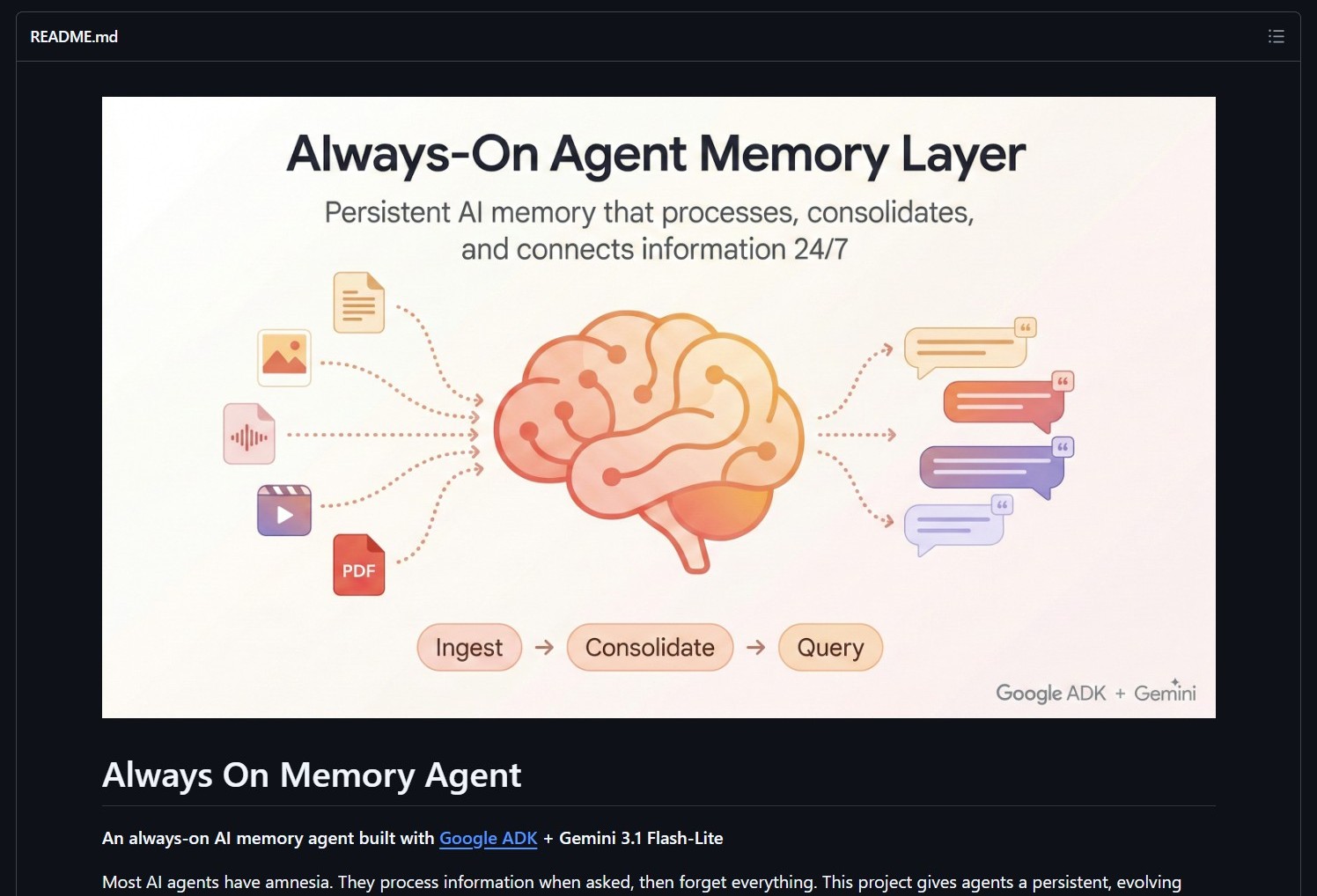

Google Agent Development Kit: Always-On Memory Agents with Gemini

Opened this GitHub repo expecting the usual boilerplate, walked away thinking about how elegant the solution actua...

Google · AI Agents

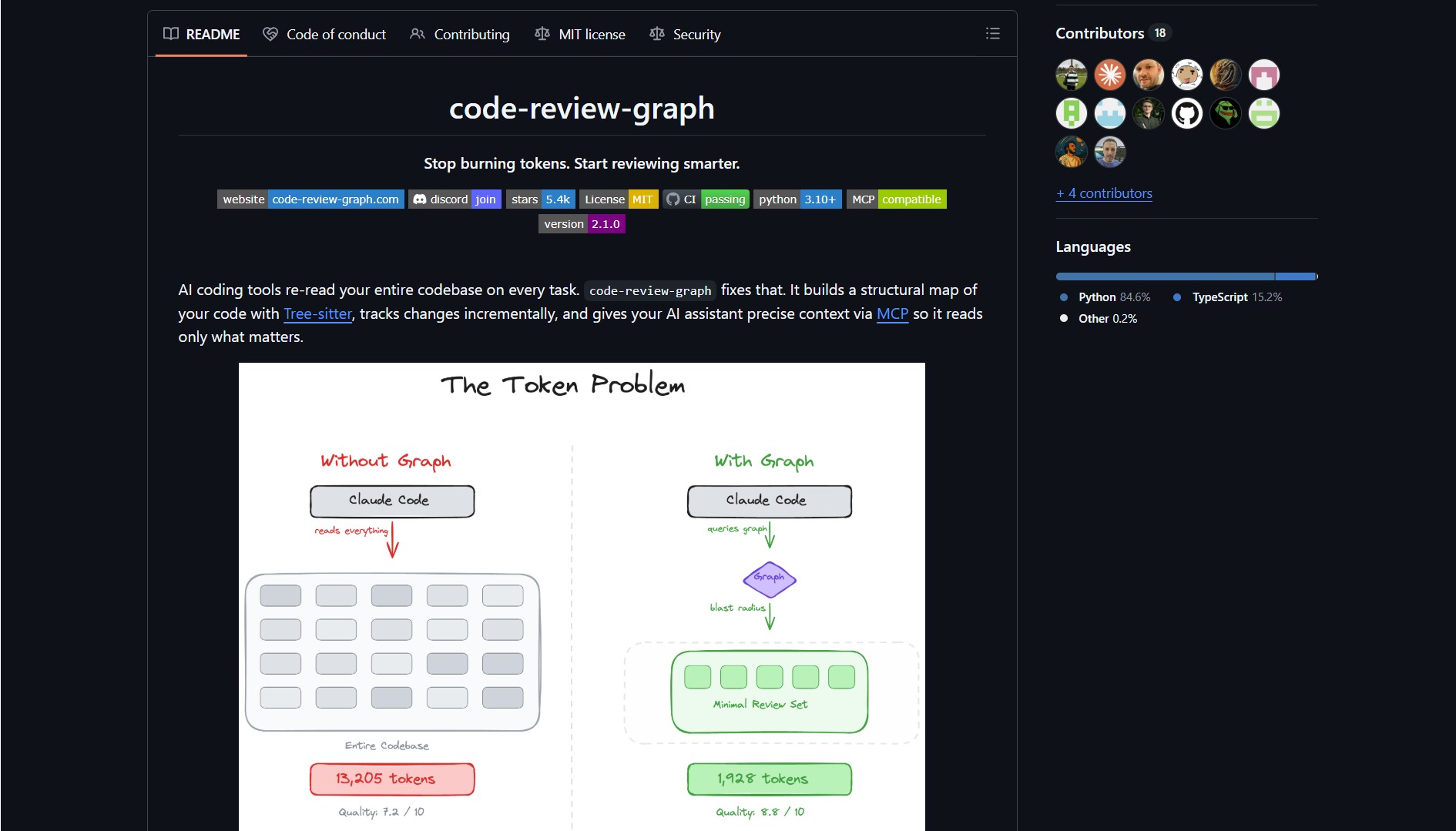

Code Review Graph: Local Project Graph for Claude Code Context

Been struggling with AI coding assistants re‑reading my entire codebase on every task for months, then found this ...

AI Coding · Code Analysis

Flash-moe: Stream 397B MoE Models from SSD on MacBook Pro

What made me stop scrolling: flash‑moe runs a 400‑billion parameter model on a MacBook Pro by streaming weights fr...

Local AI · Apple Silicon

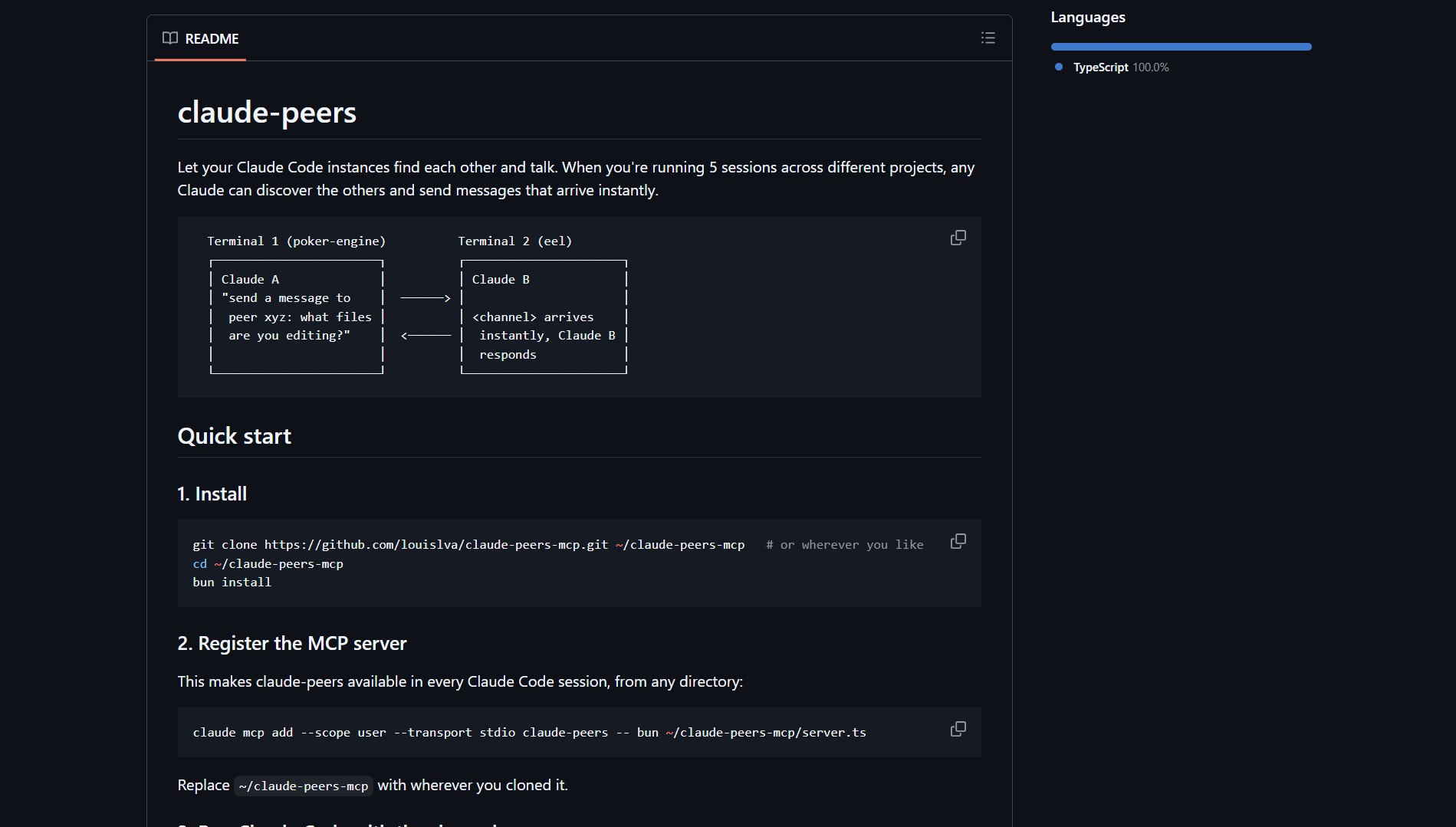

Claude-peers-mcp: Real-Time Communication Between Claude Code Instances

What caught my attention about Claude‑peers‑mcp wasn’t just that it connects multiple Claude Code sessions, it’s w...

MCP · AI Agents

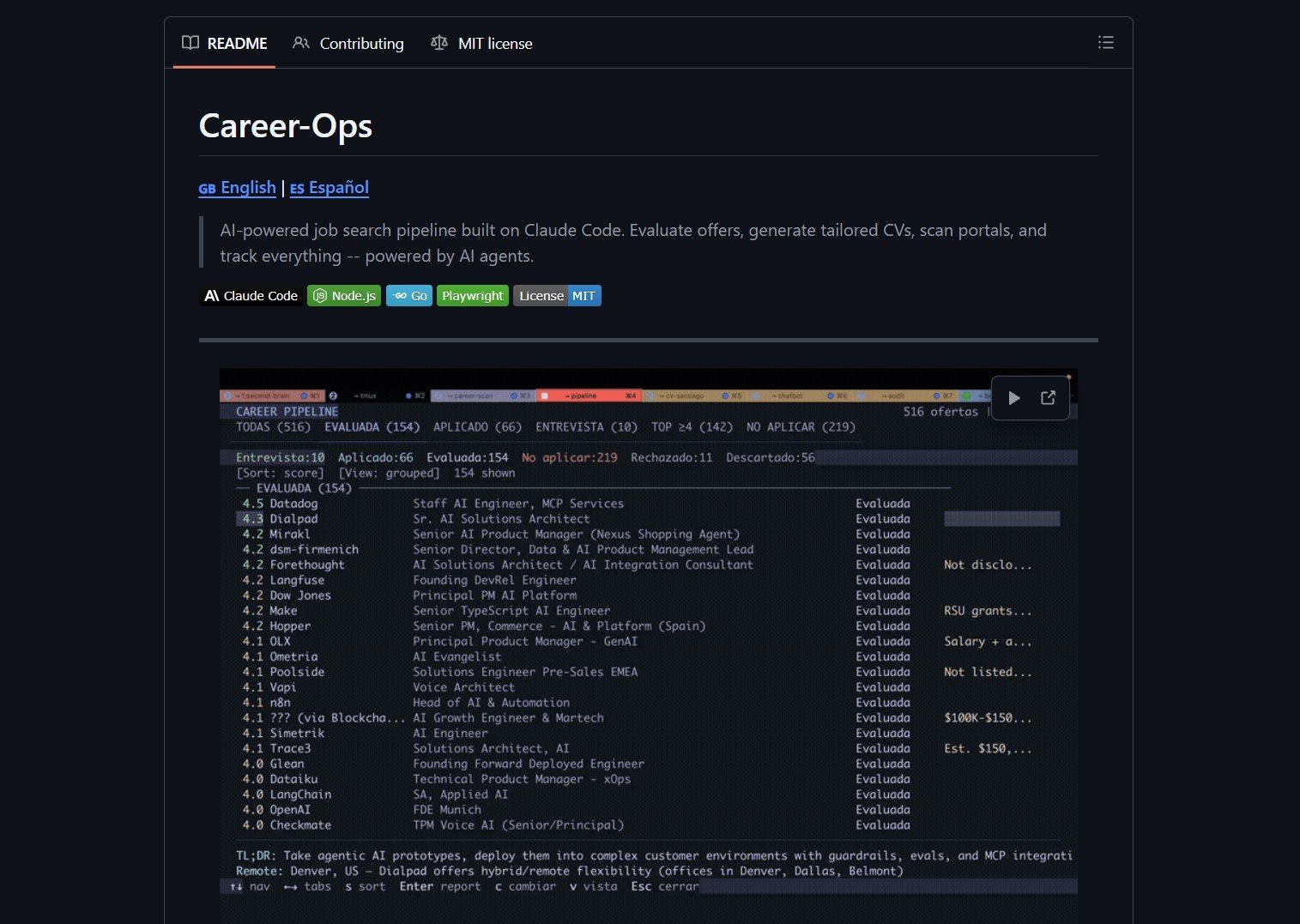

Career-Ops: AI-Powered Job Search Command Center

I ran into Career-Ops while browsing GitHub for job search automation tools, and what caught my attention was its ...

Job Search · Automation

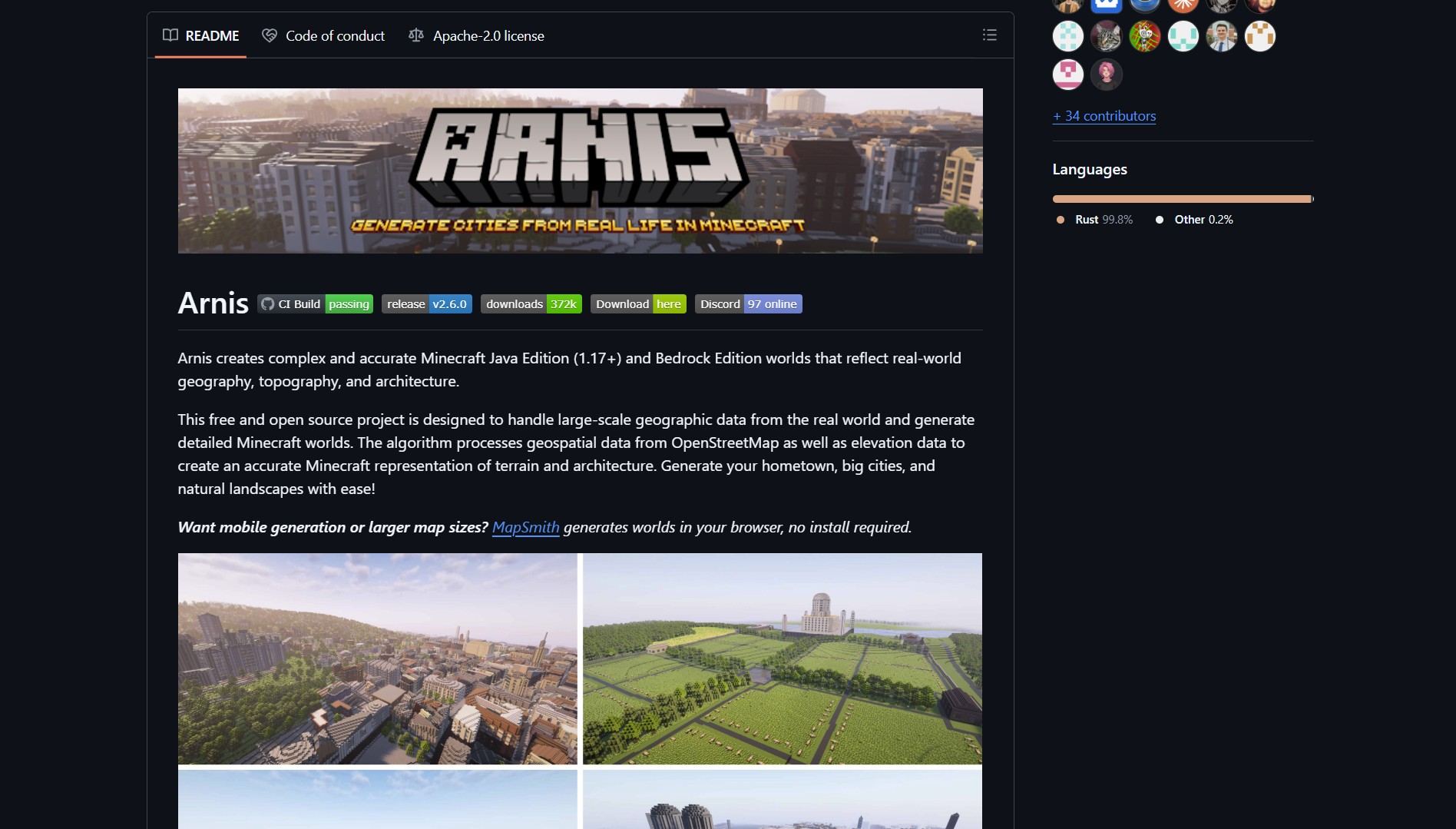

Arnis: Turn Real-World Locations into Playable Minecraft Maps

Saw this repo and realized someone had finally solved the problem everyone pretends isn’t annoying: manually recre...

Minecraft · OpenStreetMap

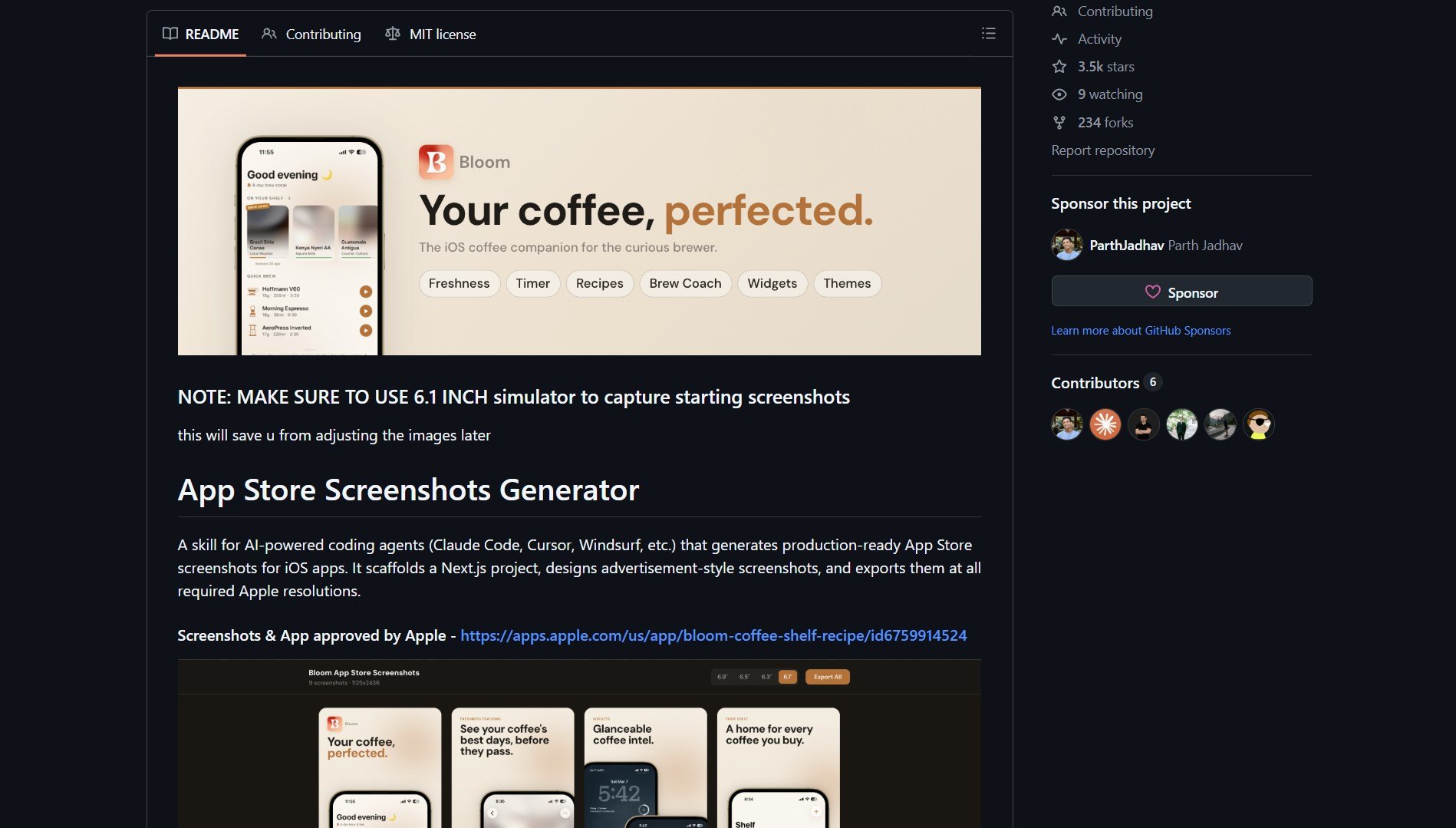

App Store Screenshots: Automate iOS Screenshot Generation with AI

Saw this repo and realized someone had finally solved the problem everyone pretends isn’t annoying: manually cropp...

iOS · App Store

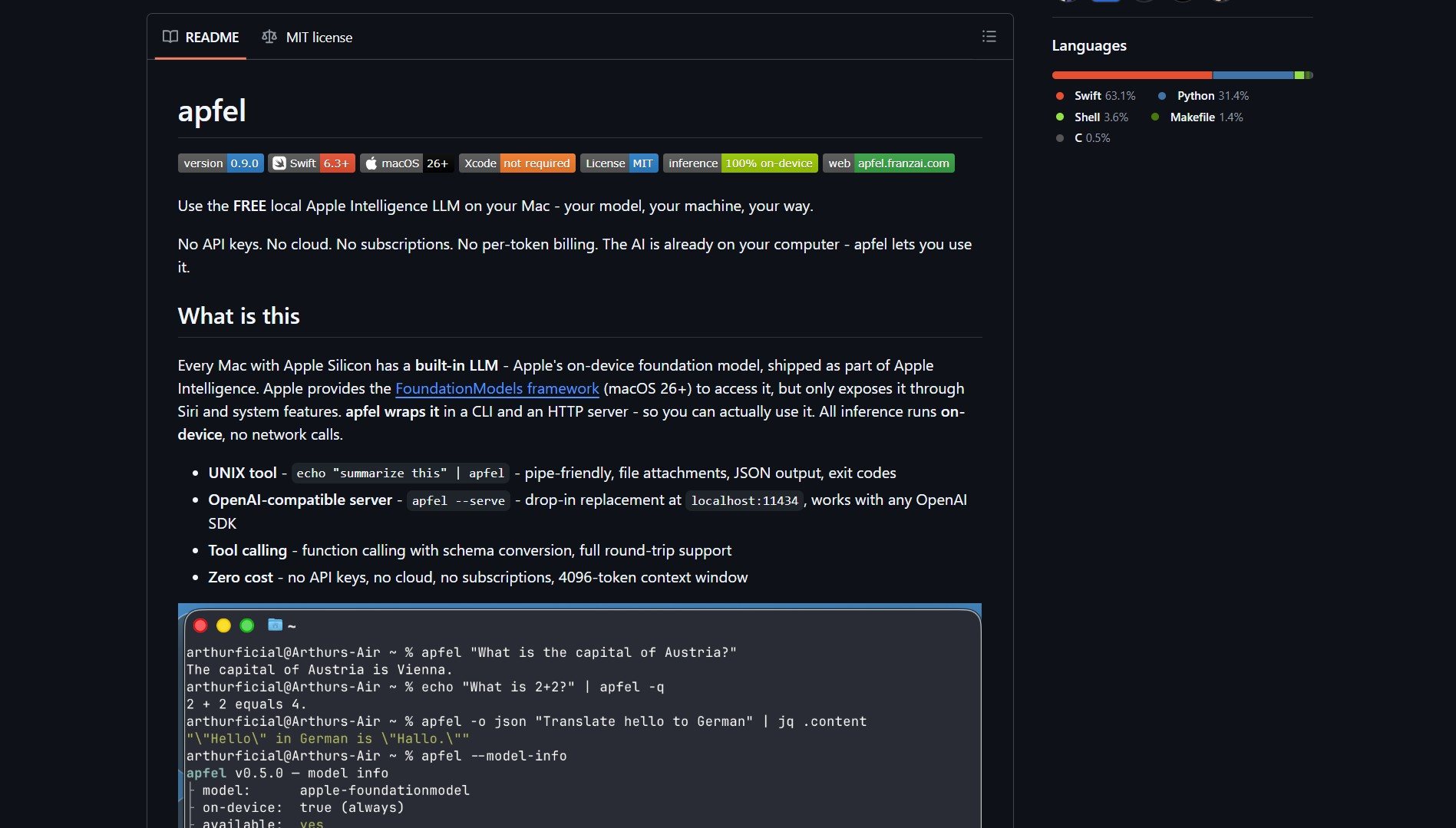

Apfel: Unlock Apple's On-Device Language Model

I ran into Apfel while browsing for local AI solutions, and what caught my attention was its premise: your Apple S...

Apple Silicon · Local AI

Why Git After Work

Stop drowning in noise

GitHub has 500 million repositories. Most of them are abandoned, broken, or irrelevant. Git After Work cuts through all of it and shows you only the repos that cleared the bar.

No more rabbit holes

You open one repo, click three links, and lose an hour. We do that digging for you. Every listing comes with a curator note so you know in ten seconds if it is worth your time.

Tools you actually use

Most repo directories are organized by programming language, not by what the tool does for you. Git After Work is built for builders, not archivists. Find what you need by problem, not by syntax.